Efficient Regional Memory Network for Video Object Segmentation

Haozhe Xie, Hongxun Yao, Shangchen Zhou, Shengping Zhang, Wenxiu Sun

Abstract

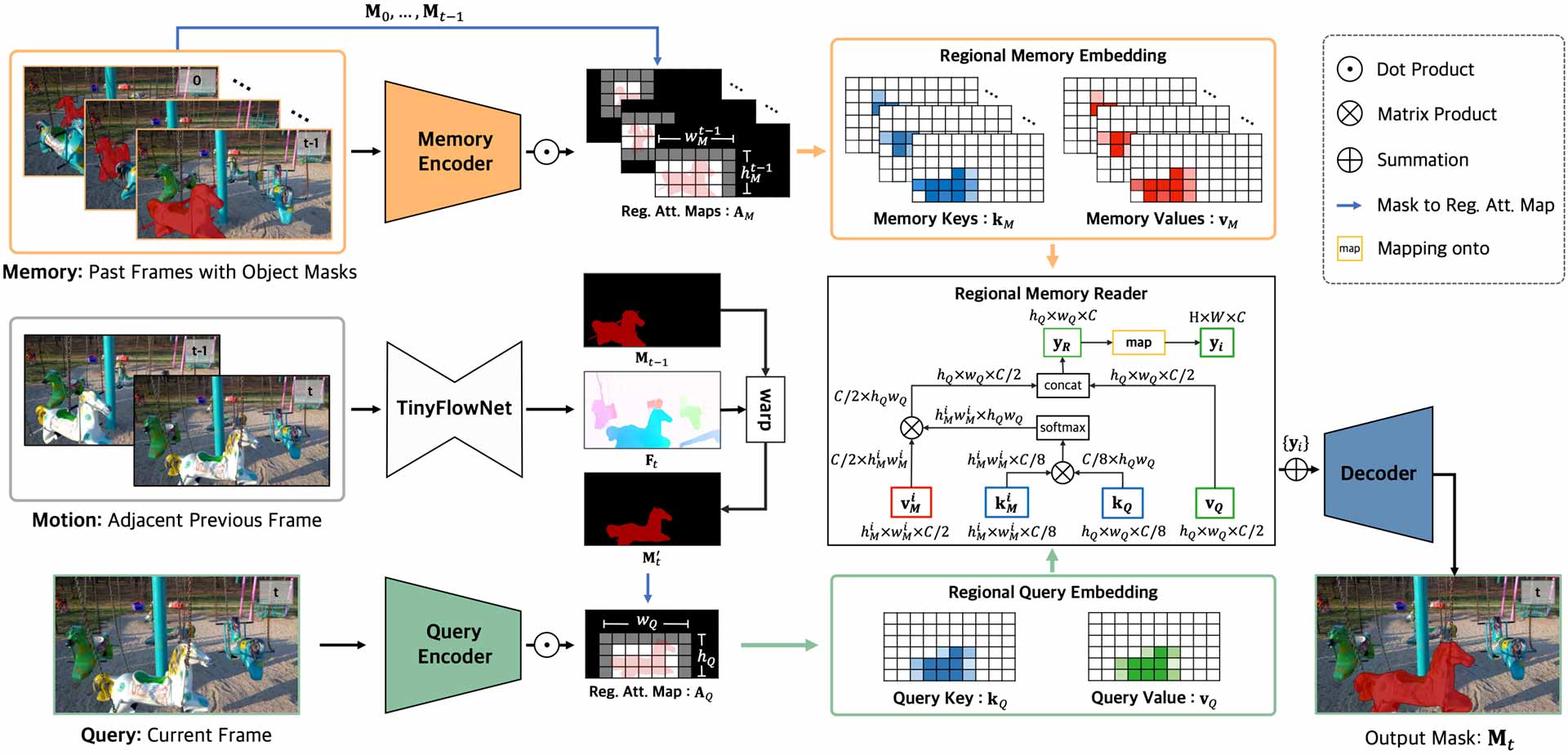

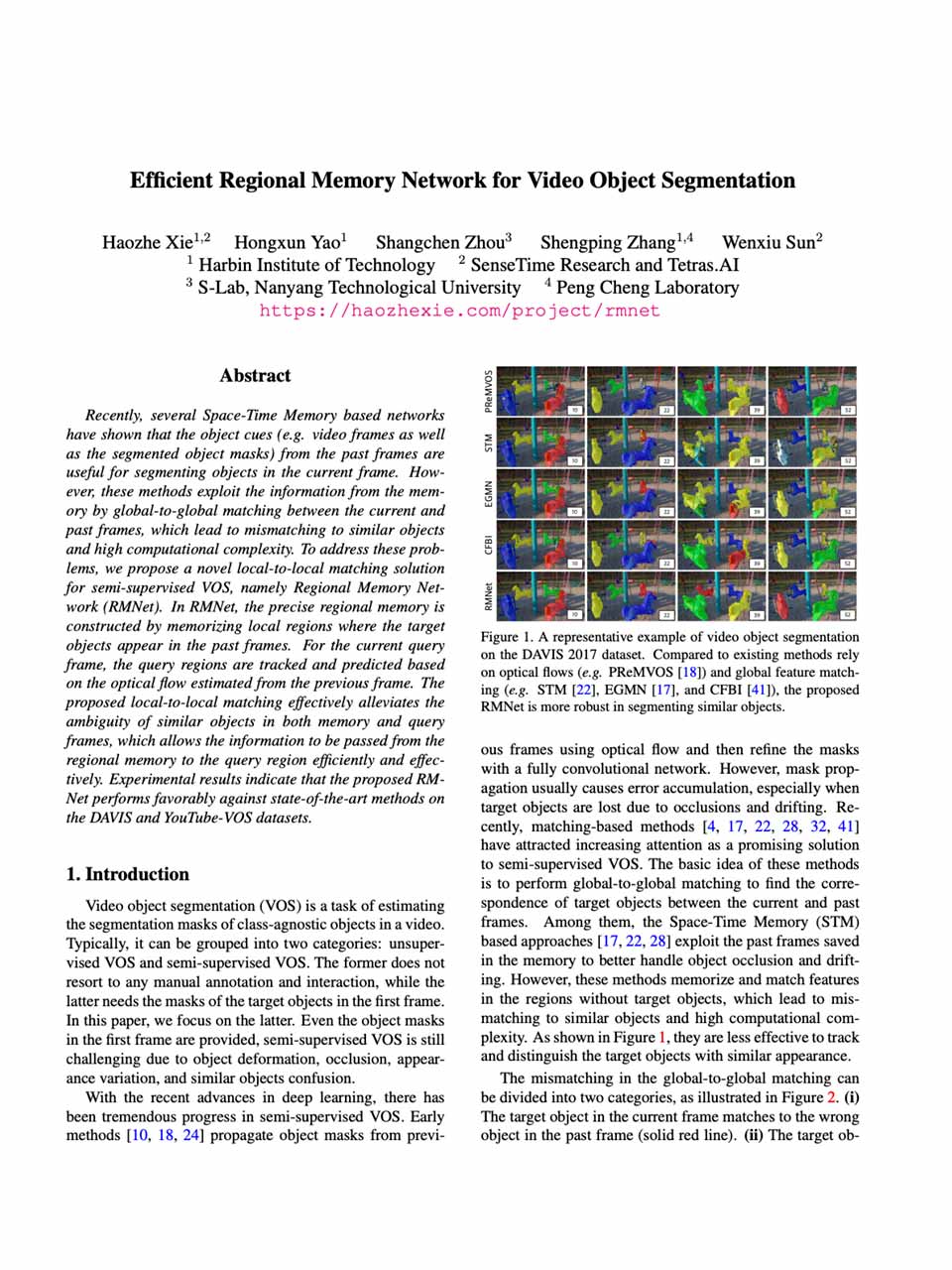

Recently, several Space-Time Memory-based networks have shown that object cues (e.g., video frames as well as the segmented object masks) from past frames are useful for segmenting objects in the current frame. However, these methods exploit the information from the memory by global-to-global matching between the current and past frames, which leads to mismatches with similar objects and high computational complexity. To address these problems, we propose a novel local-to-local matching solution for semi-supervised video object segmentation (VOS), namely Regional Memory Network (RMNet). In RMNet, a precise regional memory is constructed by memorizing the local regions where the target objects appear in the past frames. For the current query frame, the query regions are tracked and predicted based on the optical flow estimated from the previous frame. The proposed local-to-local matching effectively alleviates the ambiguity of similar objects in both memory and query frames, which allows information to be passed from the regional memory to the query region efficiently and effectively. Experimental results indicate that the proposed RMNet performs favorably against state-of-the-art methods on the DAVIS and YouTube-VOS datasets.
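The local-to-local matching idea can be illustrated with a minimal NumPy sketch. It is not the RMNet implementation: the function names `box_to_mask` and `regional_memory_read`, the rectangular regions, and the plain dot-product softmax attention are all assumptions made for illustration. The query region is taken as given here, whereas the abstract describes tracking it with optical flow.

```python
import numpy as np


def box_to_mask(height, width, box):
    """Flattened boolean mask for a box (y0, x0, y1, x1); hypothetical helper."""
    y0, x0, y1, x1 = box
    mask = np.zeros((height, width), dtype=bool)
    mask[y0:y1, x0:x1] = True
    return mask.ravel()


def regional_memory_read(mem_key, mem_val, qry_key, mem_mask, qry_mask):
    """Read memory values for query positions, restricted to regions.

    mem_key: (Nm, C) memory keys, mem_val: (Nm, D) memory values,
    qry_key: (Nq, C) query keys, mem_mask: (Nm,) bool, qry_mask: (Nq,) bool.
    Returns an (Nq, D) array that is zero outside the query region.
    """
    out = np.zeros((qry_key.shape[0], mem_val.shape[1]), dtype=float)
    if not mem_mask.any() or not qry_mask.any():
        return out

    # Keep only positions inside the regions, so matching is local-to-local.
    k = mem_key[mem_mask]
    v = mem_val[mem_mask]
    q = qry_key[qry_mask]

    # Assumed similarity: dot product followed by a softmax over memory positions.
    scores = q @ k.T
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)

    out[qry_mask] = weights @ v
    return out
```

Because only the in-region rows take part, the similarity matrix has size (query region) × (memory region) rather than (all query positions) × (all memory positions), and memory positions outside the region cannot be matched at all.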

Citation

@inproceedings{xie2021efficient,
  title={Efficient Regional Memory Network for Video Object Segmentation},
  author={Xie, Haozhe and Yao, Hongxun and Zhou, Shangchen and Zhang, Shengping and Sun, Wenxiu},
  booktitle={CVPR},
  year={2021}
}

Spotlight Video

License

This project is open-sourced under the MIT license.
